Over the past few weeks our own platform was targeted by a mass-registration bot wave. The attack was simple in shape but loud in consequences, and dealing with it gave us a useful opportunity to put our own product through a real-world stress test.

A sign-up issue appears

The pattern was straightforward: bots hammering our signup form, creating accounts they never used. They didn’t confirm the verification email, didn’t log back in, didn’t touch a single feature. From a product standpoint they were inert — but the side effects were anything but.

Each registration triggered the same internal machinery a real signup would:

- Welcome flows

- Internal Slack alerts and notifications

- CRM events

- Lifecycle email flows, including follow-up and end-of-trial emails, among others

- Analytics events (visits, conversion events)

Multiplied across thousands of fake accounts in a short window, this produced a constant background hum of noise that made it harder for the team to surface legitimate signups. It also distorted the metrics we rely on day to day: funnel conversion rates, signup-to-activation curves, country-level acquisition reports and more. It did not take long to become a real nuisance.

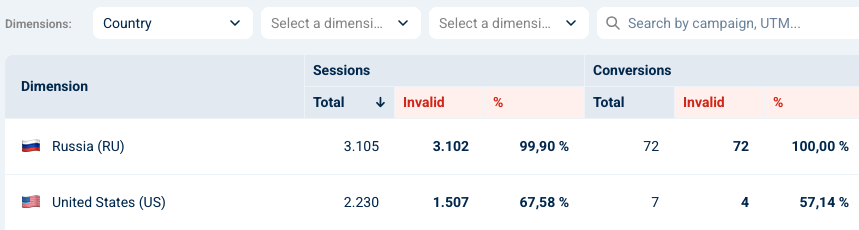

A sample of Opticks analytics showing that almost 100% of both traffic and conversions were invalid during the selected period

We could have reached for the obvious answers: a CAPTCHA, an email verification gate before account creation, hard rate limiting by IP. We chose not to. Friction at the top of the funnel is one of the most expensive things you can add to a SaaS product, and we already had a tool capable of telling humans and bots apart without asking either of them to prove anything. So we used Opticks.

A bot that was easy to bust

The bot wasn’t the most sophisticated we’ve had to uncover. It browsed with several exposed signs of tampering and flagrant errors:

- Sent its User-Agent wrapped in literal quotes, such as “Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/142.0.0.0 Safari/537.36”

- Always browsed through datacenters and non-commercial ISPs

- Used the same timezone (Europe/Moscow) and keyboard language (plain English)

- Sent mismatched User-Agent brands (e.g. it signaled platform=Linux alongside a User-Agent claiming to be a Mac)

- Reported a virtual graphics provider (Google SwiftShader)
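To make a few of these signals concrete, here is a minimal sketch of how four of them could be checked on a single session. It is not the Opticks detection logic: the `SessionSignals` shape, its field names and the flag labels are all hypothetical, chosen only for illustration.

```typescript
// Hypothetical shape of the signals collected for one session.
// Field names are illustrative, not the Opticks data model.
interface SessionSignals {
  userAgent: string;          // raw User-Agent value as received
  clientHintPlatform: string; // platform hint, e.g. "macOS" or "Linux"
  isDatacenterIp: boolean;    // result of an IP/ISP lookup done elsewhere
  webglRenderer: string;      // graphics renderer reported by the browser
}

// Returns the list of red flags raised by a session.
function redFlags(s: SessionSignals): string[] {
  const flags: string[] = [];
  const ua = s.userAgent.trim();

  // Browsers send the User-Agent value unquoted, so wrapping quotes
  // suggest the value was pasted in by a script.
  if (/^["“”'].*["“”']$/.test(ua)) flags.push("quoted-user-agent");

  // The platform hint should agree with the OS claimed in the User-Agent.
  const claimsMac = /Macintosh/.test(ua);
  if (claimsMac && s.clientHintPlatform.toLowerCase() !== "macos") {
    flags.push("platform-mismatch");
  }

  if (s.isDatacenterIp) flags.push("datacenter-ip");

  // SwiftShader is a software renderer, often seen in headless or
  // virtualised Chrome rather than on real consumer hardware.
  if (/swiftshader/i.test(s.webglRenderer)) flags.push("software-renderer");

  return flags;
}

// A session shaped like the bot described above.
const sample: SessionSignals = {
  userAgent:
    '"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/142.0.0.0 Safari/537.36"',
  clientHintPlatform: "Linux",
  isDatacenterIp: true,
  webglRenderer: "Google SwiftShader",
};
const sampleFlags = redFlags(sample);
// sampleFlags: ["quoted-user-agent", "platform-mismatch", "datacenter-ip", "software-renderer"]
```

No single flag is conclusive on its own. A real detector would weigh many more signals, but a session that raises all four at once leaves little room for doubt.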

We were confident in the detection, but a question arose: how could we act without impacting real users in any way?

The solution: Opticks callbacks and Ghost Tokens

Using the Opticks JS callback event, we wired the analysis result directly into the signup endpoint, so by the time the controller decided what to do, it already knew whether the request came from a human or a bot.

For confirmed bots we did something deliberately quiet: we returned a ghost login token. From the bot’s perspective the registration succeeded: same status code and same response shape. Behind the scenes, no row was written to the user table, no email was queued, no welcome flow fired and no regular Slack alert went out. The only trace was a “bot registration” alert in a dedicated channel, which let us measure and verify the blocked attempts. The bot walked away convinced it had registered an account, and our backoffice never knew it had been there.
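The ghost-token idea can be sketched in a few lines. This is a simplified illustration, not our production controller: the `SignupRequest` and `SignupResponse` types, the in-memory `users` and `sideEffects` arrays, the channel names and the `newToken` helper are all assumptions made for the example.

```typescript
type Verdict = "human" | "bot";

interface SignupRequest { email: string; verdict: Verdict; }
interface SignupResponse { status: number; body: { token: string }; }

// In-memory stand-ins for the user table and the side-effect queues (hypothetical).
const users: string[] = [];
const sideEffects: string[] = [];

// Placeholder token generator; a real system would use a cryptographically secure source.
function newToken(): string {
  return "tok_" + Math.random().toString(36).slice(2);
}

function handleSignup(req: SignupRequest): SignupResponse {
  // Both paths return the same status code and the same response shape.
  const response: SignupResponse = { status: 200, body: { token: newToken() } };

  if (req.verdict === "bot") {
    // Ghost path: nothing is persisted and no flow is queued.
    // Only a dedicated alert is recorded so blocked attempts can be measured.
    sideEffects.push(`slack:#bot-registrations:${req.email}`);
    return response;
  }

  // Human path: the usual signup machinery runs.
  users.push(req.email);
  sideEffects.push(
    `email:welcome:${req.email}`,
    `slack:#signups:${req.email}`,
    `crm:created:${req.email}`,
  );
  return response;
}

// Usage: both callers see a 200 and a token, but only one account exists afterwards.
const ghostResult = handleSignup({ email: "bot@example.com", verdict: "bot" });
const realResult = handleSignup({ email: "ana@example.com", verdict: "human" });
```

The important design choice is that the branch happens after the response has been built, so nothing in the status code or payload lets the caller tell which path it took.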

To make the cleanup complete we also used the Opticks JS callback event on the client side. When a bot was identified, the callback fired and prevented our analytics tools from counting the session as a conversion. That kept GA, our internal warehouse and the marketing dashboards aligned with reality instead of with whatever the botnet wanted us to believe.
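On the client side, the gating logic can be as small as the sketch below. The `VerdictEvent` payload, the `TrackFn` signature and the `signup_conversion` event name are hypothetical; the real Opticks callback payload and your analytics client will look different.

```typescript
// Hypothetical verdict payload; the real callback payload may differ.
interface VerdictEvent { isBot: boolean; sessionId: string; }

// Stand-in for whatever sends events to GA, a warehouse or a dashboard.
type TrackFn = (eventName: string, sessionId: string) => void;

// Builds a callback that forwards the conversion only for human sessions.
// It returns true when the conversion was tracked, false when suppressed.
function makeVerdictCallback(track: TrackFn): (e: VerdictEvent) => boolean {
  return (e) => {
    if (e.isBot) return false; // suppressed: the conversion never reaches analytics
    track("signup_conversion", e.sessionId);
    return true;
  };
}

// Usage with a recording tracker in place of a real analytics client.
const recorded: string[] = [];
const onVerdict = makeVerdictCallback((name, id) => {
  recorded.push(`${name}:${id}`);
});
onVerdict({ isBot: true, sessionId: "s-1" });
onVerdict({ isBot: false, sessionId: "s-2" });
// recorded now holds only "signup_conversion:s-2"
```

Passing the tracker in as a function keeps the verdict check independent of any particular analytics tool, so the same callback can guard several of them.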

The net result: real users still go through a single-step registration with no extra hoops, bots get a convincing 200 OK and walk away, and our internal systems stay clean.

It wasn’t just us

Once we had a clear fingerprint of the attack on our own infrastructure, we looked across our client base, and the same botnet had been busy elsewhere. We identified traffic from this exact actor against more than 40 client domains. Over a three-day span, the largest affected segments looked like this:

| Country (ISP) | Timezone | Keyboard | Affected customers | Sessions |
| --- | --- | --- | --- | --- |
| Germany | Europe/Moscow | en-US;en | 44 | 1133 |
| Sweden | Europe/Moscow | en-US;en | 43 | 935 |
| Netherlands | Europe/Moscow | en-US;en | 40 | 685 |
| United States | Europe/Moscow | en-US;en | 32 | 379 |
| Austria | Europe/Moscow | en-US;en | 32 | 268 |
| Poland | Europe/Moscow | en-US;en | 28 | 240 |
| Indonesia | Asia/Jakarta | en-US;en | 2 | 238 |
| Luxembourg | Europe/Moscow | en-US;en | 30 | 162 |
| France | Europe/Moscow | en-US;en | 25 | 109 |
| Switzerland | Europe/Moscow | en-US;en | 22 | 90 |

The fingerprint is hard to miss once you stack the columns: residential ISPs spread across Europe and the US, but with the system clock pegged to Europe/Moscow on almost every session, and an entirely uniform en-US;en keyboard layout. That kind of geographic spread paired with a single timezone is a signature of a botnet running through datacenter and residential proxies on a small number of underlying machines. The proxy can change the apparent country, but it can’t change the timezone the underlying OS is reporting. The Indonesian row is the exception that proves the rule: the one segment where the timezone matches the country, sitting on a different cluster of devices.
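Stacking the columns can be done mechanically. The sketch below groups the rows of the table by reported timezone and counts how many countries and sessions share each one. The `Segment` type and the `summariseByTimezone` helper are names introduced for this example; the figures are the ones from the table.

```typescript
interface Segment { country: string; timezone: string; sessions: number; }

// Rows taken from the table above.
const segments: Segment[] = [
  { country: "Germany", timezone: "Europe/Moscow", sessions: 1133 },
  { country: "Sweden", timezone: "Europe/Moscow", sessions: 935 },
  { country: "Netherlands", timezone: "Europe/Moscow", sessions: 685 },
  { country: "United States", timezone: "Europe/Moscow", sessions: 379 },
  { country: "Austria", timezone: "Europe/Moscow", sessions: 268 },
  { country: "Poland", timezone: "Europe/Moscow", sessions: 240 },
  { country: "Indonesia", timezone: "Asia/Jakarta", sessions: 238 },
  { country: "Luxembourg", timezone: "Europe/Moscow", sessions: 162 },
  { country: "France", timezone: "Europe/Moscow", sessions: 109 },
  { country: "Switzerland", timezone: "Europe/Moscow", sessions: 90 },
];

interface TimezoneSummary { countries: string[]; sessions: number; }

// Groups segments by reported timezone, collecting distinct countries and total sessions.
function summariseByTimezone(rows: Segment[]): Record<string, TimezoneSummary> {
  const out: Record<string, TimezoneSummary> = {};
  for (const row of rows) {
    if (!out[row.timezone]) out[row.timezone] = { countries: [], sessions: 0 };
    const summary = out[row.timezone];
    if (summary.countries.indexOf(row.country) === -1) summary.countries.push(row.country);
    summary.sessions += row.sessions;
  }
  return out;
}

const byTimezone = summariseByTimezone(segments);
const totalSessions = segments.reduce((sum, row) => sum + row.sessions, 0);
const moscowShare = byTimezone["Europe/Moscow"].sessions / totalSessions;
// Europe/Moscow: 9 countries, 4001 sessions
// Asia/Jakarta:  1 country,   238 sessions
// moscowShare is 4001 / 4239, roughly 94%
```

One timezone reported from nine different countries, covering roughly 94% of the sessions in these ten segments, is exactly the kind of imbalance that points to proxies in front of a small pool of machines.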

Wrapping up

What started as a noisy nuisance turned into a useful proof point for the approach we advocate for our clients: the best response to a bot attack is often the one the bot never sees. By moving the decision upstream, into the signup endpoint itself, and letting our own detection layer make the call, we kept the user experience completely untouched for real people while quietly neutralizing the attack for everyone else. No CAPTCHA, no extra verification email, no rate-limit collateral damage. Just a verdict, a ghost token, and silence.

The broader takeaway is that bot mitigation isn’t really a security problem in isolation; it’s a data integrity problem with security overtones. Left unchecked, this kind of traffic doesn’t just clutter a backoffice — it corrupts the metrics teams use to make product decisions, distorts experimentation results, and slowly erodes trust in the dashboards everyone is looking at. Catching it at the door is the obvious win, but routing the verdict through analytics callbacks so the noise never reaches the warehouse is what keeps the rest of the business honest.
